The Lethal Autonomy Dilemma

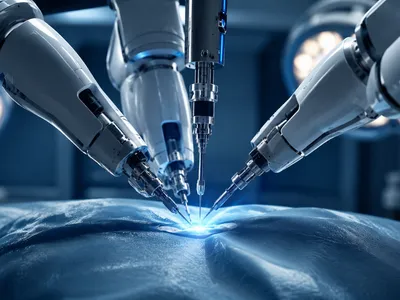

Delegating targeting decisions to algorithms removes humans from the immediate decision loop, creating a moral hazard and fracturing traditional notions of responsible command. Whether autonomous systems can reliably distinguish combatants from civilians remains hotly debated: critics point to the inability of machines to grasp contextual nuance or weigh proportionality, while proponents emphasize the potential for precision unclouded by human emotion.

The speed of engagement amplifies this dilemma: autonomous weapons may reduce collateral damage through advanced sensor fusion, but they also risk catastrophic failures that outpace human intervention. Operational advantage and ethical risk are tightly intertwined, underscoring the complex trade-offs of removing human judgment from life-and-death decisions.

Several states have begun outlining operational constraints to mitigate these risks. Below are key principles emerging from early policy frameworks that attempt to balance military necessity with moral accountability.

- 🧑‍✈️ Meaningful human control remains the central benchmark for legitimate autonomy.

- 🛑 Fail-safe design must ensure termination capability even under degraded communications.

- 📍 Strict geographic or temporal restrictions limit autonomous engagement to well-defined battlespaces.

- ✅ Pre-deployment certification requires rigorous testing against a wide spectrum of operational scenarios.

Without such constraints, the integration of lethal autonomy risks normalizing a form of warfare where accountability dissolves into complex chains of code. The challenge lies in creating governance structures that evolve as quickly as the technology itself.

Accountability Gaps in Warfare

When autonomous systems cause unlawful harm, assigning responsibility becomes legally and morally complex. Traditional chains of command presume a human decision-maker. Autonomous weapons open an “accountability gap” in which programmers, commanders, and operators can each claim plausible deniability, complicating the assignment of liability under international humanitarian law.

Corporate development practices further blur responsibility, as private contractors design algorithms outside direct command, and sovereign states deploying these systems may lack awareness of biases or vulnerabilities. The doctrinal model of command responsibility fails because the “subordinate” is no longer a human capable of judgment, making it difficult to trace specific outcomes to individual actors or lines of code.

Institutional inertia and strategic competition exacerbate the accountability challenge. Rapid deployment prioritizes speed over oversight, creating a regulatory lag where autonomous technology advances faster than the legal frameworks intended to govern its use, threatening the deterrent effect of international humanitarian law.

Algorithmic Bias and Foreseeable Harm

Machine learning models inherit biases from their training data, which can turn lethal autonomous actions into potential violations of non-discrimination principles under international humanitarian law. Limited technical capacity among states to audit these systems creates a dangerous asymmetry in accountability between developers and users.

Operational realities complicate matters further: developers may not foresee all deployment contexts, while commanders often lack the expertise to detect subtle model failures. As a result, systems can be fielded with latent vulnerabilities that only surface under specific conditions, posing significant legal and ethical risks.

Algorithmic bias can cascade in contested environments. For example, computer vision models trained on limited datasets may fail in dense urban settings or in adverse weather, producing errors that fall systematically on particular groups or locations. Legal responsibility hinges on foreseeability, and emerging scholarship suggests that strict liability models could close these gaps, though resistance to them persists. The central tension remains between rapid deployment and ensuring non-discriminatory, accountable outcomes.

The following table outlines common sources of bias identified in recent autonomous systems testing and their potential impact on compliance with targeting rules.

| Bias Source | Operational Context | Risk to Legal Compliance |
|---|---|---|
| Training data imbalance | Over‑representation of certain terrains or demographics | Potential violation of distinction principle |
| Sensor degradation | Adverse weather, smoke, or electronic warfare | Proportionality miscalculations |
| Feedback loop amplification | Reinforcement of targeting patterns through continuous learning | Systematic targeting errors without human override |

Addressing these risks requires pre-deployment certification standards that mandate testing across diverse scenarios. Without such standards, algorithmic bias remains a foreseeable harm, and one that states may already be obliged to prevent under existing treaties.
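One way to make "testing across diverse scenarios" concrete is to compare a model's error rate across test conditions and flag any condition that performs markedly worse than the best one. The following is a minimal sketch of that idea only. The record format, the function names, and the 0.05 gap threshold are illustrative assumptions, not part of any certification standard mentioned here.

```python
from collections import defaultdict


def error_rates_by_condition(records):
    """Compute the error rate per test condition.

    records: iterable of (condition, predicted_label, true_label) tuples.
    The tuple layout is a hypothetical format chosen for this sketch.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for condition, predicted, actual in records:
        totals[condition] += 1
        if predicted != actual:
            errors[condition] += 1
    return {c: errors[c] / totals[c] for c in totals}


def flag_disparities(rates, max_gap=0.05):
    """Return conditions whose error rate exceeds the best-performing
    condition by more than max_gap (an assumed, arbitrary tolerance)."""
    if not rates:
        return []
    best = min(rates.values())
    return sorted(c for c, r in rates.items() if r - best > max_gap)
```

A disparity flagged this way says only that performance differs across conditions. Deciding whether the gap is legally or ethically acceptable remains a human judgment.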

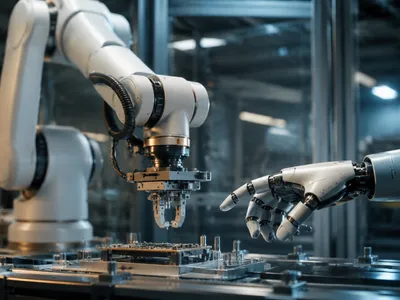

Human-Machine Teaming Imperatives

Maintaining meaningful human control requires interfaces that enable genuine judgment rather than passive oversight, because operators often defer to machine recommendations out of automation bias. Effective human-autonomy teaming treats human and machine as complementary cognitive agents, leveraging machine speed and precision while preserving human moral reasoning and contextual understanding.

Practical implementations benefit from adaptive autonomy that communicates its confidence and uncertainty, fostering calibrated trust and reducing both automation bias and operator workload. Techniques such as dynamic task allocation let machines handle routine tasks while escalating ambiguous cases to humans. Early investment in human‑systems integration is critical to prevent brittle interfaces that compromise mission effectiveness and increase the risk of unlawful actions.
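The escalation logic behind dynamic task allocation can be sketched generically as a routing rule: automate only what is both pre-approved as routine and reported with high confidence, and send everything else to a person. The sketch below is a simplified illustration under stated assumptions. The `Assessment` type, the `allocate` function, and the 0.9 threshold are hypothetical names and values, and the example assumes the system's reported confidence is well calibrated, which is itself a hard problem.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATED = "automated"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Assessment:
    """Hypothetical machine output for a single task."""
    task_id: str
    confidence: float  # self-reported confidence, expected in [0, 1]
    routine: bool      # True if the task type is pre-approved for automation


def allocate(assessment: Assessment, threshold: float = 0.9) -> Route:
    """Route a task, defaulting to human review whenever in doubt."""
    if not 0.0 <= assessment.confidence <= 1.0:
        # Malformed or NaN confidence is treated as ambiguous, never as safe.
        return Route.HUMAN_REVIEW
    if assessment.routine and assessment.confidence >= threshold:
        return Route.AUTOMATED
    return Route.HUMAN_REVIEW
```

The design choice worth noting is the default: every path that is not explicitly cleared falls through to human review, so a failure in the confidence signal increases human involvement instead of reducing it.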

Toward Verifiable Ethical Frameworks

Meaningful ethical constraints on autonomous weapons require more than high‑level principles; they demand technical mechanisms for verification. Without the ability to audit systems before and during deployment, accountability remains aspirational rather than operational.

The following table outlines three essential components of a verifiable framework, each addressing a distinct stage of the system lifecycle.

| Verification Component | Purpose | Technical Enabler |
|---|---|---|
| Pre‑deployment certification | Ensure compliance before fielding | Formal methods, adversarial testing |
| Runtime monitoring | Detect anomalies during operations | Performance telemetry, kill‑switch logic |
| Post‑mission forensic audit | Enable after‑action accountability | Immutable logs, explainable AI interfaces |

These mechanisms collectively shift the debate from whether autonomy can be ethical to how ethics can be embedded into engineering practice. Transparency requirements and independent oversight bodies are equally critical to ensure that verification is not merely a self‑serving exercise by developers.

- 📝 Mandatory third‑party audits before any system receives operational approval.

- 🔍 Open‑source benchmarks for testing discrimination and proportionality in varied environments.

- 🌐 Binding international standards that prohibit deployment without verifiable safeguards.

- 🔄 Continuous retraining protocols that require human review of algorithmic drift.

Implementing ethical AI frameworks faces institutional hurdles: verification imposes significant costs and may conflict with the pace of autonomy adoption. States may resist independent oversight on sovereignty or proprietary grounds, leaving commitments hollow without credible enforcement mechanisms. The technical community has demonstrated that explainable AI, formal verification, and tamper‑evident logging are feasible. The key challenge is the political will to embed these tools in binding agreements, transforming ethical AI from aspiration into verifiable operational reality.
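Tamper‑evident logging, one of the tools mentioned above, is commonly built as a hash chain: each entry stores a hash that covers both its own content and the previous entry's hash, so altering any past record breaks every later link. The following is a minimal sketch using only the Python standard library. The entry layout and the function names are assumptions made for illustration, not a description of any fielded system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) keeps the hash stable.
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a record whose hash covers the payload and the prior hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "prev": prev_hash,
        "payload": payload,
        "hash": _digest(prev_hash, payload),
    })


def verify(log: list) -> bool:
    """Recompute the chain and report whether every link still matches."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this is tamper‑evident, not tamper‑proof: anyone able to rewrite the whole log can recompute every hash. Detection therefore depends on the latest hash being held by an independent party, which is where the oversight bodies discussed above come in.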

Preventing a Strategic Stability Crisis

The proliferation of autonomous military systems threatens to destabilize the strategic balance between nuclear powers. Perception dilemmas arise when one state cannot distinguish between an adversary’s autonomous reconnaissance and a pre‑emptive strike, increasing the risk of accidental escalation.

Crisis stability erodes further when decision timelines compress below human cognitive capacity. Leaders may face pressure to launch or retaliate based on machine‑generated warnings, knowing that any hesitation could allow an opponent’s autonomous systems to achieve irreversible advantage. The danger lies not merely in the technology itself but in structural uncertainty: the absence of shared norms about its use. Confidence‑building measures modeled on Cold War arms control, such as pre‑notification of autonomous exercises and launch‑on‑warning prohibitions, offer a pathway to mitigate these risks before they become irreversible.