Collaborative Robot Evolution

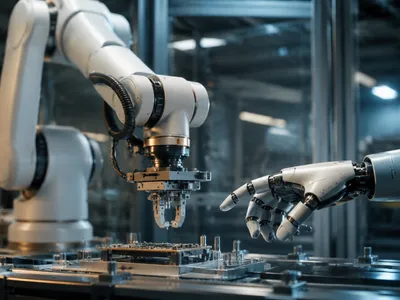

The paradigm of human-robot interaction within industrial settings has fundamentally shifted from segregated operations to seamless collaboration. Early industrial robots were confined to safety cages, but the latest generation of collaborative robots, or cobots, operates alongside human workers without traditional barriers.

This evolution is powered by advanced force-sensing technologies and reactive control algorithms. These systems allow a robot to detect unexpected contact and halt or retract its motion almost immediately, protecting the people working nearby. The economic barrier to automation has also fallen significantly.
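One common way to explain contact detection is to compare the torque each joint actually measures with the torque a dynamic model of the arm predicts for the current motion; a large residual suggests an external force. The sketch below assumes those model predictions are available. `ContactMonitor`, `react`, and the threshold values are hypothetical and illustrative; production controllers typically use filtered observers rather than a raw per-sample comparison.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ContactMonitor:
    """Flags unexpected contact from joint-torque residuals (illustrative sketch)."""
    # Per-joint residual thresholds in N·m; illustrative values, not from any standard.
    thresholds: Sequence[float]

    def check(self, measured: Sequence[float], expected: Sequence[float]) -> list[int]:
        """Return indices of joints whose |measured - expected| exceeds the threshold."""
        if not (len(measured) == len(expected) == len(self.thresholds)):
            raise ValueError("measured, expected and thresholds must have equal length")
        return [
            i
            for i, (m, e, limit) in enumerate(zip(measured, expected, self.thresholds))
            if abs(m - e) > limit
        ]


def react(monitor: ContactMonitor, measured, expected) -> str:
    """Choose a reaction: stop when any joint reports an unexpected external torque."""
    return "protective_stop" if monitor.check(measured, expected) else "continue"


monitor = ContactMonitor(thresholds=[5.0, 5.0, 4.0, 2.0, 2.0, 1.0])
expected = [10.0, 25.0, 8.0, 1.5, 0.8, 0.2]  # torques predicted by the dynamic model
measured = [10.4, 33.0, 8.1, 1.6, 0.7, 0.2]  # second joint (index 1) sees an extra 8 N·m
print(monitor.check(measured, expected))  # [1]
print(react(monitor, measured, expected))  # protective_stop
```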

Modern cobots are designed for easy programming, often through intuitive hand-guiding or graphical interfaces, reducing the need for specialized robotics engineers. This flexibility enables their deployment in small-batch, high-mix production environments where traditional automation was not viable.

Their application spans tasks like machine tending, screw driving, and quality inspection. The result is a hybrid workstation where human dexterity and cognitive skill are augmented by robotic endurance and precision, creating a more productive and ergonomic work cell.

The integration of cobots necessitates a reevaluation of workstation design and process flow. It is not merely a substitution of manual labor but a re-engineering of the task itself to leverage the unique strengths of both human and machine agents in a shared workspace for optimal output.

Key technological drivers enabling safe collaboration include:

- Power and Force Limiting: Embedded joint-torque sensors monitor the forces the arm exerts, allowing the system to keep contact forces within biomechanical limits such as those given in ISO/TS 15066.

- Skin-like Sensor Coverings: Some advanced systems employ sensitive artificial skin that can detect proximity or light touch across the entire robot arm.

- Speed and Separation Monitoring: Using vision systems, the robot dynamically adjusts its speed based on the calculated distance to the human operator, maintaining a virtual safety zone.
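Speed and separation monitoring can be sketched with a simplified protective separation distance. In this sketch the human is assumed to keep approaching at a constant speed for the whole reaction and stopping time, and the robot is assumed to move at speed `v_r` during the reaction time and then decelerate uniformly, covering `v_r * t_stop / 2`. That gives S_p = v_h(T_r + T_s) + v_r(T_r + T_s/2) + margin, which can be solved for the largest permissible robot speed. The function name and all default values are illustrative assumptions, not figures from a standard.

```python
def max_robot_speed(
    distance: float,        # current measured human-robot separation, m
    v_human: float = 1.6,   # assumed human approach speed, m/s (illustrative)
    t_react: float = 0.1,   # assumed sensing + controller reaction time, s
    t_stop: float = 0.3,    # assumed robot stopping time, s
    margin: float = 0.2,    # lumped intrusion distance + measurement uncertainty, m
    v_max: float = 1.0,     # robot's programmed maximum speed, m/s
) -> float:
    """Largest robot speed whose protective separation distance fits in `distance`.

    Simplified model (assumption):
        S_p(v_r) = v_human*(t_react + t_stop) + v_r*(t_react + t_stop/2) + margin
    Setting S_p(v_r) = distance and solving for v_r gives the speed limit.
    """
    budget = distance - v_human * (t_react + t_stop) - margin
    if budget <= 0.0:
        return 0.0  # too close even for a stationary robot: command a protective stop
    return min(v_max, budget / (t_react + t_stop / 2))
```

With these default assumptions, a 2.0 m separation allows the full 1.0 m/s, a 1.0 m separation allows roughly 0.64 m/s, and below about 0.84 m the function commands a stop.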

The performance characteristics of different cobot models can be compared across several dimensions critical for manufacturing applications. This includes their reach, payload capacity, and inherent safety certification level.

| Cobot Model Class | Typical Payload (kg) | Reach (mm) | Primary Safety Standard |
|---|---|---|---|
| Light-duty | 3-5 | 500-800 | ISO/TS 15066:2016 |
| Medium-duty | 10-15 | 1000-1300 | ISO 10218-1:2011 |
| Heavy-duty | 20+ | 1500+ | ISO 10218-1 & PL d |
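As a rough screening aid, the payload and reach ranges in the comparison table can be turned into a lookup that returns the lightest class covering a requirement. This is a hypothetical helper built only on the table's upper bounds; it counts the end-effector mass against the payload (the rated payload must carry both tool and part), and any real selection should be checked against the specific model's datasheet.

```python
# Upper ends of the typical ranges from the comparison table (payload kg, reach mm).
# Heavy-duty is open-ended (20+ kg, 1500+ mm), so it is the fallback.
CLASS_LIMITS = [
    ("Light-duty", 5.0, 800.0),
    ("Medium-duty", 15.0, 1300.0),
]


def smallest_class(payload_kg: float, reach_mm: float, tool_mass_kg: float = 0.0) -> str:
    """Return the lightest cobot class whose typical payload and reach cover the need."""
    total_kg = payload_kg + tool_mass_kg  # the arm carries the gripper as well as the part
    for name, max_payload_kg, max_reach_mm in CLASS_LIMITS:
        if total_kg <= max_payload_kg and reach_mm <= max_reach_mm:
            return name
    # Requirements in the gaps between table rows also land here, which is conservative.
    return "Heavy-duty"


print(smallest_class(2.0, 600.0, tool_mass_kg=1.0))   # Light-duty
print(smallest_class(4.0, 600.0, tool_mass_kg=1.5))   # Medium-duty: 5.5 kg exceeds 5 kg
```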

Enhancing Precision with AI-Powered Vision Systems

Robotic perception has moved beyond simple binary checks to sophisticated interpretation of complex visual scenes. The convergence of high-resolution 2D and 3D vision sensors with deep learning algorithms has created vision systems capable of handling extreme part variability and unstructured environments.

Traditional machine vision required meticulous lighting, fixed part presentation, and rigid programming. In contrast, AI-driven systems are trained on vast image datasets, learning to identify parts from features that would be difficult to specify explicitly in rule-based programs. This capability is transformative for bin picking and assembly verification.

A robot can now reliably locate and grasp randomly oriented parts from a bin, a task that was once a significant hurdle in automation. The system calculates an optimal grasp point and trajectory in real time, adjusting for occlusions and positional uncertainty. This removes the need for expensive and space-consuming part feeders.
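Grasp-point selection can take many forms; the sketch below swaps in a simple heuristic for a suction gripper rather than any particular vendor's method. It assumes a point cloud with per-point unit normals in the robot base frame (z up), discards points too close to the bin walls or too steeply tilted for a vertical approach, and picks the highest remaining point, on the assumption that it is the least likely to be occluded. The function name and parameters are hypothetical.

```python
import numpy as np


def select_suction_grasp(points, normals, bin_min, bin_max,
                         max_tilt_deg=30.0, wall_clearance=0.02):
    """Pick a suction grasp from a bin point cloud (heuristic sketch).

    points, normals: (N, 3) arrays in the robot base frame with z pointing up;
    normals are assumed unit length and oriented toward the camera.
    Returns (index, point, normal) of the chosen candidate, or None.
    """
    points = np.asarray(points, dtype=float)
    normals = np.asarray(normals, dtype=float)
    lo = np.asarray(bin_min, dtype=float) + wall_clearance
    hi = np.asarray(bin_max, dtype=float) - wall_clearance

    # Keep points whose x/y lie away from the walls so the gripper does not collide.
    inside = np.all((points[:, :2] >= lo[:2]) & (points[:, :2] <= hi[:2]), axis=1)
    # Keep surfaces a vertical suction cup can approach: normal within max_tilt of +z.
    cos_tilt = normals[:, 2]
    flat_enough = cos_tilt >= np.cos(np.radians(max_tilt_deg))
    valid = inside & flat_enough
    if not np.any(valid):
        return None  # e.g. trigger a re-scan or agitate the bin

    # Prefer the highest valid point; flatness only breaks near-ties.
    score = points[:, 2] + 0.01 * cos_tilt
    score[~valid] = -np.inf
    i = int(np.argmax(score))
    return i, points[i], normals[i]


points = np.array([[0.10, 0.10, 0.05], [0.20, 0.20, 0.08],
                   [0.01, 0.20, 0.10], [0.25, 0.15, 0.09]])
normals = np.array([[0, 0, 1], [0, 0, 1], [0, 0, 1], [0.7071, 0, 0.7071]])
choice = select_suction_grasp(points, normals, bin_min=(0, 0, 0), bin_max=(0.4, 0.3, 0.2))
print(choice[0])  # 1: index 2 is too close to a wall, index 3 is tilted 45 degrees
```

A production system would also check the full approach trajectory for collisions before committing to the grasp.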

In quality control, these systems perform real-time defect detection more consistently than manual inspection, identifying micro-cracks, surface finish anomalies, or assembly errors. When production images are labeled and fed back into periodic retraining, the network's accuracy can improve over time, creating a self-optimizing loop.
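On the line, a defect classifier's output still has to be turned into an action. A minimal sketch, assuming the network returns a label and a confidence score: confident "ok" parts pass, confident defects are rejected, and everything in between goes to a human inspector, whose verdicts are a natural source of labeled data for retraining. The labels, thresholds, and names are hypothetical; confidence scores are not necessarily calibrated probabilities, so real thresholds would be tuned on validation data.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str         # e.g. "ok", "micro_crack", "surface_anomaly" (hypothetical labels)
    confidence: float  # classifier score in [0, 1]


def route_part(pred: Prediction, accept_at: float = 0.95, reject_at: float = 0.90) -> str:
    """Map a classifier output to a line action (thresholds are illustrative)."""
    if pred.label == "ok" and pred.confidence >= accept_at:
        return "pass"
    if pred.label != "ok" and pred.confidence >= reject_at:
        return "reject"
    # Uncertain cases go to manual review; the inspector's verdict can be stored
    # as a labeled example for the next retraining run.
    return "manual_review"


print(route_part(Prediction("ok", 0.99)))           # pass
print(route_part(Prediction("micro_crack", 0.93)))  # reject
print(route_part(Prediction("ok", 0.70)))           # manual_review
```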

The architecture of such a system involves multiple coordinated components. The 2D image data is processed by a convolutional neural network (CNN) for feature extraction and classification, while the depth data is used to generate a precise point cloud for robotic guidance.
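The depth path can be sketched in two steps. A pulsed time-of-flight sensor converts the light's round-trip time into distance (the light travels out and back, hence the division by two), and a depth image is then back-projected into a point cloud with the camera's intrinsic parameters. This sketch assumes an ideal pinhole camera with no lens distortion and a depth image that already holds the z-coordinate along the optical axis; `fx`, `fy`, `cx`, `cy` stand for the focal lengths and principal point in pixels, and the function names are hypothetical.

```python
import numpy as np

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def tof_depth(round_trip_s):
    """Distance from a pulsed time-of-flight measurement (out-and-back travel)."""
    return SPEED_OF_LIGHT * np.asarray(round_trip_s, dtype=float) / 2.0


def depth_to_point_cloud(depth, fx, fy, cx, cy):
    """Back-project an (H, W) depth image into an (N, 3) point cloud in the camera frame.

    Assumes an ideal pinhole model; pixels with depth <= 0 are treated as invalid.
    """
    depth = np.asarray(depth, dtype=float)
    v, u = np.indices(depth.shape)  # v = row (image y) index, u = column (image x) index
    valid = depth > 0
    z = depth[valid]
    x = (u[valid] - cx) * z / fx
    y = (v[valid] - cy) * z / fy
    return np.column_stack((x, y, z))


print(tof_depth(10e-9))  # roughly 1.499 m for a 10 ns round trip
depth = np.array([[1.0, 0.0], [2.0, 2.0]])  # the 0.0 pixel has no valid return
cloud = depth_to_point_cloud(depth, fx=100.0, fy=100.0, cx=0.0, cy=0.0)
print(cloud.shape)  # (3, 3)
```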

| Vision System Component | Function | Output for Robotics |
|---|---|---|
| 3D Time-of-Flight Camera | Measures depth by calculating light pulse travel time | Dense point cloud for object volume and orientation |
| Convolutional Neural Network (CNN) | Analyzes 2D image for feature recognition and classification | Part identity, defect label, confidence score |
| Point Cloud Registration Software | Aligns perceived point cloud with CAD model | Precise 6D pose (X, Y, Z, roll, pitch, yaw) |
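The core of the registration step in the table can be illustrated with the Kabsch (SVD) method, which finds the least-squares rotation and translation between two point sets once point-to-point correspondences are known. Full registration pipelines usually obtain those correspondences iteratively (for example with ICP) or from feature matching; this sketch assumes they are given. It then expresses the result as X, Y, Z, roll, pitch, yaw using the Z-Y-X convention, which is an assumption: robot vendors differ in their angle conventions. All function names are hypothetical.

```python
import numpy as np


def rot_zyx(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) @ Ry(pitch) @ Rx(roll), angles in radians."""
    cr, sr = np.cos(roll), np.sin(roll)
    cp, sp = np.cos(pitch), np.sin(pitch)
    cy, sy = np.cos(yaw), np.sin(yaw)
    rx = np.array([[1, 0, 0], [0, cr, -sr], [0, sr, cr]])
    ry = np.array([[cp, 0, sp], [0, 1, 0], [-sp, 0, cp]])
    rz = np.array([[cy, -sy, 0], [sy, cy, 0], [0, 0, 1]])
    return rz @ ry @ rx


def rigid_transform(model_pts, scene_pts):
    """Least-squares R, t such that scene ≈ R @ model + t (Kabsch / SVD method).

    Rows of the two (N, 3) arrays must already correspond point-to-point.
    """
    P = np.asarray(model_pts, dtype=float)
    Q = np.asarray(scene_pts, dtype=float)
    p_mean, q_mean = P.mean(axis=0), Q.mean(axis=0)
    H = (P - p_mean).T @ (Q - q_mean)          # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))     # -1 would indicate a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = q_mean - R @ p_mean
    return R, t


def to_pose(R, t):
    """(x, y, z, roll, pitch, yaw) for the Z-Y-X convention used by rot_zyx."""
    roll = np.arctan2(R[2, 1], R[2, 2])
    pitch = np.arctan2(-R[2, 0], np.hypot(R[2, 1], R[2, 2]))
    yaw = np.arctan2(R[1, 0], R[0, 0])
    return np.array([*t, roll, pitch, yaw])


# Synthetic check: move a CAD point set by a known pose and recover it.
rng = np.random.default_rng(0)
cad_points = rng.uniform(-0.05, 0.05, size=(20, 3))   # model frame, metres
R_true, t_true = rot_zyx(0.1, -0.2, 0.3), np.array([0.5, -0.1, 0.2])
observed = cad_points @ R_true.T + t_true              # noise-free "scan"
R_est, t_est = rigid_transform(cad_points, observed)
pose = to_pose(R_est, t_est)
```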

Mobile Robotics and Logistics

The modern smart factory is a dynamic ecosystem where materials and components are in constant motion. Static assembly lines are being supplemented or replaced by fleets of autonomous mobile robots (AMRs) that transport items with unprecedented flexibility.

Unlike their predecessors, automated guided vehicles (AGVs), which follow fixed routes, AMRs navigate using onboard LiDAR, cameras, and simultaneous localization and mapping (SLAM) software. This allows them to interpret their environment, avoid obstacles, and dynamically replan paths without requiring fixed tracks or magnetic tape.
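Dynamic replanning can be illustrated with A* search on an occupancy grid: when the robot's map marks a newly blocked cell, it simply searches again from its current position. Real AMR navigation stacks use SLAM-built costmaps, robot footprints, and local planners; this is a simplified stand-in with a 4-connected grid, unit move costs, and hypothetical names.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = occupied), or None."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parents back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry superseded by a cheaper route
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None


grid = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
route = astar(grid, (0, 0), (2, 3))
grid[0][2] = 1  # e.g. a pallet is detected in the upper aisle; the map update triggers a replan
rerouted = astar(grid, (0, 0), (2, 3))
```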

This capability is critical for just-in-time sequencing in complex assembly processes, such as automotive manufacturing, where the correct part must arrive at the precise workstation at the exact moment of need.

The logistical optimization extends beyond mere transport. These systems are integrated with the factory's central Manufacturing Execution System (MES), allowing real-time tracking of every component's location and status. This creates a visible and responsive material flow, reducing work-in-progress inventory and minimizing line-side storage footprints.

The robots themselves are becoming mobile manipulation platforms, with some models incorporating lightweight robotic arms to perform simple pick-and-place tasks at points along their route, further consolidating process steps.

The Rise of Digital Twins

A digital twin is a comprehensive virtual model that mirrors a physical manufacturing system in real time. It transcends traditional simulation by creating a living, data-driven counterpart fed by sensors from the factory floor.

This technology enables predictive analysis and virtual commissioning of robotic cells before any physical build occurs. Engineers can stress-test production logic, identify cycle time bottlenecks, and validate safety protocols in a risk-free digital environment.

The bidirectional data flow is crucial: the physical system updates the twin, and insights from the twin can optimize the physical process. For robotics, this means programming paths, testing collaborative workflows, and even training AI control algorithms on large synthetic datasets generated by the twin.

The implementation of a digital twin typically involves several integrated layers of technology, each serving a distinct function in creating the cyber-physical feedback loop. The fidelity of the model determines the scope and accuracy of the insights it can generate, from layout planning to predictive maintenance of robotic actuators.

By running "what-if" scenarios, such as introducing a new product variant or altering a robot's sequence, manufacturers can de-risk innovation and accelerate time-to-market. The twin becomes the single source of truth for the system's past performance, present state, and future potential.
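The simplest "what-if" question a twin answers is which station paces a line and what happens when one cycle time changes. The sketch below captures only that bottleneck arithmetic for a serial line with fixed cycle times and no breakdowns, blocking, or starvation; a real twin would use discrete-event simulation with variability. Station names and times are hypothetical.

```python
def bottleneck(station_times_s):
    """Return (slowest station, parts per hour) for a serial line.

    Deterministic sketch: fixed cycle times, no breakdowns, blocking or starvation,
    so the slowest station paces the whole line.
    """
    name = max(station_times_s, key=station_times_s.get)
    return name, 3600.0 / station_times_s[name]


baseline = {"load": 30.0, "robot_weld": 55.0, "inspect": 40.0}  # seconds per part
# What-if (hypothetical): re-sequencing the robot program trims its cycle to 38 s.
variant = {**baseline, "robot_weld": 38.0}

print(bottleneck(baseline))  # robot_weld, about 65.5 parts/h
print(bottleneck(variant))   # ('inspect', 90.0): the bottleneck moves to inspection
```

The second scenario illustrates why such studies matter: speeding up the robot beyond the inspection station's 40 s gains nothing further until inspection itself is improved.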

| Digital Twin Layer | Description | Application in Robotics |
|---|---|---|
| Physical Entity | The real-world robot, sensors, and production environment. | Provides live data on position, torque, temperature, and cycle status. |
| Virtual Model | High-fidelity CAD and behavioral simulation model. | Enables offline programming, collision detection, and workflow simulation. |
| Data Linkage | IoT connectivity and data pipelines (sensors to cloud). | Synchronizes the virtual and physical states in near real-time. |
| Service Layer | Analytics, AI, and user interface applications. | Performs predictive maintenance, optimization algorithms, and visualizes KPIs. |
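Predictive maintenance in the service layer can be illustrated by fitting a trend to a health indicator streamed from the robot and extrapolating when it will cross an alarm threshold. This is a deliberately simple linear-trend sketch; the indicator (here a hypothetical per-cycle gearbox temperature), the threshold, and the function name are assumptions, and real systems typically use more robust degradation models.

```python
import numpy as np


def cycles_until_threshold(history, threshold):
    """Estimate remaining cycles before a health indicator crosses `threshold`.

    Fits a straight line to the indicator history (one sample per cycle) and
    extrapolates. Returns None when the trend is flat or improving.
    """
    y = np.asarray(history, dtype=float)
    x = np.arange(len(y))
    slope, intercept = np.polyfit(x, y, 1)  # degree-1 fit returns [slope, intercept]
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope  # cycle index where the line hits threshold
    return max(0.0, crossing - (len(y) - 1))   # cycles remaining after the latest sample


# Hypothetical indicator: peak gearbox temperature per cycle, drifting upward.
history = [60.0 + 0.05 * i for i in range(200)]
remaining = cycles_until_threshold(history, threshold=80.0)
print(remaining)
```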

Human-Robot Synergy in Assembly

Advanced assembly tasks require a blend of force, dexterity, and cognitive judgment that pure automation struggles to replicate. The solution lies in synergistic workcells where humans and robots divide labor based on their innate capabilities.

A human operator might perform the complex, low-volume tasks like cable routing or connector mating, while a collaborative robot handles the repetitive, high-precision, or ergonomically challenging steps such as screw driving or applying adhesives. This division optimizes the overall cycle time and product quality.
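The division of labor can be framed as a small assignment problem: give each step to the human or the robot so that the busier of the two finishes as early as possible, respecting steps only one of them can do. The sketch below brute-forces every assignment, which is practical for the handful of steps at one workstation. It assumes both agents work in parallel with no precedence constraints between steps, a simplification; task names and times are hypothetical.

```python
from itertools import product


def allocate(tasks):
    """Split tasks between a human and a robot to minimize the cell's cycle time.

    tasks: {name: (human_time_s, robot_time_s)}, with None when that agent cannot do it.
    Assumes parallel work and no precedence constraints, so the cycle time equals
    the busier agent's total time. Returns (cycle_time, plan) or None if infeasible.
    """
    names = list(tasks)
    best = None
    for choice in product(("human", "robot"), repeat=len(names)):
        load = {"human": 0.0, "robot": 0.0}
        feasible = True
        for name, agent in zip(names, choice):
            t = tasks[name][0 if agent == "human" else 1]
            if t is None:
                feasible = False
                break
            load[agent] += t
        if feasible:
            cycle = max(load.values())
            if best is None or cycle < best[0]:
                best = (cycle, dict(zip(names, choice)))
    return best


tasks = {
    "cable_routing": (40.0, None),     # needs human dexterity
    "connector_mating": (15.0, None),
    "screw_driving": (30.0, 20.0),
    "adhesive": (25.0, 18.0),
}
cycle, plan = allocate(tasks)
print(cycle, plan)
```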

The technical foundation for this synergy includes intuitive programming by demonstration and real-time task allocation. Some systems can now interpret human gestures or even anticipate the next required part or tool, creating a fluid interaction that feels like collaboration with a skilled partner.

This shared workspace requires sophisticated sensor fusion to maintain safety and efficiency. Force-torque sensors on the robot ensure compliant assembly of delicate components, while vision systems track the human's actions so that the robot can adapt its behavior. The result is a dynamic and responsive production environment that leverages the best of both biological and artificial intelligence.
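Compliant assembly with a force-torque sensor is often implemented with admittance control: the measured force error is turned into a motion command, so the tool advances freely until contact and then settles at the target force instead of crushing the part. The 1-D simulation below is a sketch under stated assumptions: the seat is modeled as a linear spring, all parameter values and the function name are hypothetical, and the discrete update is only stable while `k_env * dt / damping < 2`.

```python
def admittance_press(f_target=10.0, damping=2000.0, k_env=5000.0, contact_at=0.01,
                     dt=0.01, steps=1000):
    """Simulate a 1-D admittance controller pressing a part against a compliant seat.

    Control law (sketch): commanded velocity v = (f_target - f_measured) / damping.
    The environment is a linear spring of stiffness k_env (N/m) starting at
    contact_at (m). Returns (final position m, final contact force N).
    """
    x = 0.0
    for _ in range(steps):
        force = k_env * max(0.0, x - contact_at)  # simulated force-torque reading
        v = (f_target - force) / damping          # admittance law: force error -> velocity
        x += v * dt
    return x, k_env * max(0.0, x - contact_at)


x_final, f_final = admittance_press()
print(x_final, f_final)  # settles near x = 0.012 m, where the spring force equals 10 N
```

In free space the tool advances at `f_target / damping` (5 mm/s here); after contact the force error decays geometrically, which is why the damping value trades insertion speed against gentleness.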

The implementation of such cells has demonstrated measurable benefits across several operational domains, fundamentally changing the economics and flexibility of complex assembly operations.

- Ergonomic Risk Reduction: Primary Benefit

- First-Pass Yield Improvement: Quality Gain

- Operator Skill Amplification: Productivity Multiplier

Sustainable and Adaptive Production

The next frontier for robotics in manufacturing aligns with global imperatives for sustainability and resilience. Robots are becoming key enablers of the circular economy through precise disassembly, sorting, and remanufacturing processes that were previously not economically feasible.

Adaptive robotic systems can handle the high degree of uncertainty in recycled material streams, identifying and separating components for reuse. This capability minimizes waste and reduces the dependency on virgin raw materials, directly contributing to a factory's environmental goals.

Energy efficiency is another critical dimension. Modern robotic drives and controls are designed for regenerative braking and low-power standby modes. Furthermore, the precision of robotic application in processes like painting or dispensing reduces material overspray and waste, leading to significant resource savings.

From an operational standpoint, the agility provided by robotic systems allows for rapid reconfiguration of production lines. This adaptive capacity is essential for responding to supply chain disruptions or shifting consumer demands. A single robotic cell can be reprogrammed overnight to produce a different product variant, embodying the principle of mass customization.

The convergence of robotics with data analytics creates a self-optimizing loop. Energy consumption, tool wear, and material usage data are continuously analyzed to identify further efficiency gains. This intelligent automation supports a strategic shift from rigid, high-volume production to flexible, responsible manufacturing that is both economically and environmentally sustainable.
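One way to turn energy data into actionable efficiency signals is to track energy per good part, which rises both when equipment draws more power and when scrap increases, and to flag periods that drift well above a reference baseline. The sketch below uses a fixed reference window and a z-score limit; the function name, window size, limit, and sample figures are all hypothetical.

```python
from statistics import mean, stdev


def flag_energy_anomalies(energy_kwh, good_parts, reference=5, z_limit=3.0):
    """Return indices of periods whose energy per good part is unusually high.

    The first `reference` periods define the baseline mean and standard deviation;
    later periods more than z_limit standard deviations above the mean are flagged.
    """
    if any(p <= 0 for p in good_parts):
        raise ValueError("every period needs at least one good part")
    per_part = [e / p for e, p in zip(energy_kwh, good_parts)]
    ref = per_part[:reference]
    mu, sigma = mean(ref), stdev(ref)  # stdev needs at least two reference periods
    return [i for i in range(reference, len(per_part))
            if sigma > 0 and (per_part[i] - mu) / sigma > z_limit]


energy_kwh = [50.0, 51.0, 49.0, 50.0, 50.0, 50.0, 65.0]  # per shift (hypothetical)
good_parts = [100] * 7
print(flag_energy_anomalies(energy_kwh, good_parts))  # [6]
```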

This evolution positions smart factories not merely as centers of production but as responsive nodes within a larger sustainable ecosystem. The inherent precision, programmability, and data-generating capability of advanced robotics are indispensable for meeting stringent carbon targets while maintaining competitiveness, demonstrating that operational excellence and environmental stewardship can be synergistic objectives driven by technological innovation.