Defining Autonomy in Machines

An autonomous decision system is a computational framework capable of selecting actions without continuous human guidance. It moves beyond simple automation by integrating perception, reasoning, and learning to achieve goals in complex, dynamic environments.

The core distinction lies in the system's ability to interpret unstructured data, weigh potential outcomes against a set of objectives, and execute a choice. This functional independence is often benchmarked across varying degrees of operational self-sufficiency, from rule-based support tools to fully self-directed agents. True autonomy necessitates a closed-loop process where the system learns from the consequences of its own decisions.

How Do These Systems Actually Make Decisions?

The decision-making engine within these systems is typically powered by advanced machine learning models. Supervised learning algorithms can classify situations and predict outcomes based on historical data, forming the basis for many choices.

For more complex environments lacking clear labels, reinforcement learning provides a dominant paradigm. Here, an agent learns optimal behavior through trial and error, receiving rewards or penalties for its actions. This approach is fundamental for systems that must navigate sequential decision tasks.
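The trial-and-error loop described above can be sketched with tabular Q-learning, one common reinforcement learning algorithm. This is a minimal illustration, not a method prescribed by this article: the five-cell corridor environment, the reward values, and all hyperparameters are assumptions chosen to keep the example small.

```python
import random

ACTIONS = (-1, +1)  # index 0 moves left, index 1 moves right


def train_q_learning(n_states=5, episodes=500, alpha=0.5, gamma=0.9,
                     epsilon=0.2, seed=0):
    """Tabular Q-learning on a toy corridor whose rightmost cell is the goal.

    The environment and every hyperparameter here are illustrative assumptions.
    """
    rng = random.Random(seed)
    goal = n_states - 1
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action_index]

    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap episode length so training always terminates
            if state == goal:
                break
            # Trial and error: explore at random with probability epsilon,
            # otherwise exploit the action currently valued highest.
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[state][i])

            next_state = min(max(state + ACTIONS[a], 0), goal)
            # Reward for reaching the goal, small penalty for every other step.
            reward = 1.0 if next_state == goal else -0.01

            # The goal is terminal, so no future value is bootstrapped from it.
            future = 0.0 if next_state == goal else max(q[next_state])
            q[state][a] += alpha * (reward + gamma * future - q[state][a])
            state = next_state
    return q


def greedy_policy(q):
    """Index of the highest-valued action in each state."""
    return [max(range(len(row)), key=lambda i: row[i]) for row in q]


q_table = train_q_learning()
policy = greedy_policy(q_table)
```

After training, the greedy policy in every non-goal cell should point toward the goal, because the step penalty makes shorter paths more valuable.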

A critical architectural component is the world model, an internal representation that allows the system to simulate and predict future states before committing to an action. This predictive capability, often built using deep neural networks, enables more sophisticated planning and reasoning. The system's objectives are encoded into a reward function or a set of constraints, which mathematically guides the optimization process toward desirable outcomes while avoiding harmful ones. The entire pipeline—from sensor data to action execution—must be robust to noise and uncertainty, a significant engineering challenge.
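As a rough sketch of planning with a world model, the example below simulates every short action sequence before committing to one, scores each with a reward function, and discards any sequence that enters a forbidden state. The one-dimensional dynamics, the target position, and the forbidden cell are hypothetical; in a real system `world_model` would be a learned predictor rather than a hand-written rule.

```python
from itertools import product


def world_model(state, action):
    """Stand-in for a learned dynamics model: position shifts by the action."""
    return state + action


def reward(state, target=3):
    """Objective encoded as a reward: closer to the target is better."""
    return -abs(target - state)


def violates_constraint(state, forbidden=frozenset({2})):
    """Hard constraint: some states must never be entered."""
    return state in forbidden


def plan(state, actions=(-1, 0, 1, 2), horizon=3):
    """Simulate all action sequences and return the best constraint-satisfying one."""
    best_return, best_sequence = float("-inf"), None
    for sequence in product(actions, repeat=horizon):
        simulated, total, feasible = state, 0.0, True
        for action in sequence:
            simulated = world_model(simulated, action)
            if violates_constraint(simulated):
                feasible = False
                break
            total += reward(simulated)
        if feasible and total > best_return:
            best_return, best_sequence = total, sequence
    return best_sequence


best_plan = plan(0)
```

Starting from position 0, the planner cannot jump straight to cell 2, so it steps to 1, jumps over the forbidden cell to 3, and then stays put. Exhaustive enumeration only works for tiny action sets and horizons; it is used here purely to make the simulate-then-commit idea visible.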

The decision logic can be examined through several core methodologies. The table below outlines the primary computational approaches.

| Approach | Description |
|---|---|
| Optimization-based Control | Solves for the best action given a mathematical model of the environment and explicit objective functions. |
| Probabilistic Reasoning | Employs Bayesian networks or similar frameworks to make decisions under uncertainty by updating belief states. |
| Deep Neural Network Policies | Uses end-to-end trained models that map perceived states directly to action probabilities, often with emergent strategic behavior. |
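The probabilistic reasoning row can be made concrete with a single Bayes update followed by an expected-utility choice. The sketch below is illustrative only: the two hypotheses, the sensor likelihoods, and the utility values are invented numbers, and a full Bayesian network would track many interdependent variables rather than one.

```python
def update_belief(prior, likelihoods, observation):
    """Bayes rule: posterior is proportional to prior times likelihood."""
    unnormalized = {h: prior[h] * likelihoods[h][observation] for h in prior}
    evidence = sum(unnormalized.values())
    return {h: p / evidence for h, p in unnormalized.items()}


def expected_utility(belief, utilities, action):
    """Average the utility of an action over the current belief state."""
    return sum(belief[h] * utilities[action][h] for h in belief)


def best_action(belief, utilities):
    """Pick the action with the highest expected utility."""
    return max(utilities, key=lambda a: expected_utility(belief, utilities, a))


# Hypothetical numbers: a machine is rarely faulty, and the alarm is imperfect.
prior = {"fault": 0.1, "ok": 0.9}
likelihoods = {
    "fault": {"alarm": 0.9, "quiet": 0.1},
    "ok": {"alarm": 0.2, "quiet": 0.8},
}
utilities = {
    "stop": {"fault": 0.0, "ok": -1.0},       # stopping a healthy machine costs a little
    "continue": {"fault": -10.0, "ok": 0.0},  # running a faulty machine costs a lot
}

posterior = update_belief(prior, likelihoods, "alarm")
choice = best_action(posterior, utilities)
```

With these numbers an alarm raises the fault belief from 0.1 to one third, which is enough to make stopping the better choice even though a fault is still the less likely hypothesis.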

Foundational Technologies and Architectures

The implementation of autonomous decision-making relies on a confluence of distinct technological paradigms. Symbolic AI, including expert systems and knowledge graphs, provides a framework for encoding explicit rules and logical reasoning, ensuring decisions are interpretable and aligned with defined domain knowledge.

In contrast, sub-symbolic approaches like deep learning excel at pattern recognition from high-dimensional raw data, such as images or sensor streams. These systems learn implicit representations, enabling them to handle tasks where formulating explicit rules is impractical. Probabilistic graphical models offer a crucial middle ground, formally representing uncertainty and the causal relationships between variables to support robust inference.

Modern architectures increasingly favor hybrid models that integrate these paradigms. A neuro-symbolic system, for instance, might use a neural network to perceive a scene and a symbolic reasoner to apply safety rules, combining the perceptual flexibility of learned models with the verifiability of explicit rules. The software architecture is equally critical, often built around a sense-plan-act loop or more reactive, embodied agent frameworks that facilitate real-time interaction with the environment.
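A neuro-symbolic pipeline of this kind can be sketched in a few lines. The example is a simplification under stated assumptions: `perceive` stands in for a neural network by thresholding made-up class scores, and the driving-style labels, rules, and actions are hypothetical.

```python
def perceive(scores, threshold=0.5):
    """Stand-in for a neural perception module: turn class scores into symbolic facts."""
    return {label for label, score in scores.items() if score >= threshold}


# Each rule pairs a condition on the perceived facts with the actions it forbids.
SAFETY_RULES = [
    (lambda facts: "pedestrian" in facts, {"accelerate", "cruise"}),
    (lambda facts: "red_light" in facts, {"accelerate", "cruise"}),
]


def decide(scores, preferences, fallback="brake"):
    """Sense, then plan under symbolic constraints, then return the action to execute."""
    facts = perceive(scores)  # sense
    forbidden = set()
    for condition, banned_actions in SAFETY_RULES:  # symbolic reasoning step
        if condition(facts):
            forbidden |= banned_actions
    for action in preferences:  # plan: first preferred action that is allowed
        if action not in forbidden:
            return action
    return fallback


clear_road = decide({"pedestrian": 0.1, "red_light": 0.2}, ["accelerate", "cruise", "brake"])
crossing = decide({"pedestrian": 0.8, "red_light": 0.1}, ["accelerate", "cruise", "brake"])
```

Keeping the rules as explicit data, separate from the learned component, is what makes the veto step auditable: a reviewer can read exactly which facts block which actions.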

The landscape of enabling technologies is diverse, each contributing a unique capability to the overall system. The table below categorizes these core components.

| Technology Type | Core Function | Typical Application |
|---|---|---|
| Machine Learning Models | Pattern recognition, prediction, and classification from data. | Computer vision for object detection, demand forecasting. |
| Optimization Engines | Finding the best solution from a set of feasible options under constraints. | Dynamic routing, resource allocation, portfolio management. |
| Simulation Environments | Providing a digital twin for safe training and scenario testing. | Training autonomous vehicles, testing supply chain strategies. |
| Edge Computing Infrastructure | Enabling low-latency data processing and decision-making at the source. | Industrial robotics, real-time fraud detection. |

Key Applications Transforming Industries

In healthcare, autonomous systems are revolutionizing diagnostics and personalized treatment. Algorithms analyze medical imagery with accuracy that, on some narrowly defined tasks, has been reported to match or exceed that of specialists, while clinical decision support systems synthesize patient records and the latest research to recommend therapeutic options. Precision medicine leverages these tools to tailor interventions to individual genetic profiles.

The financial sector employs autonomous agents for algorithmic trading, executing complex strategies at speeds impossible for humans. Risk management systems continuously monitor market conditions and portfolio exposures, automatically adjusting positions to mitigate losses. Fraud detection represents another critical application, where systems identify anomalous transaction patterns in real time.

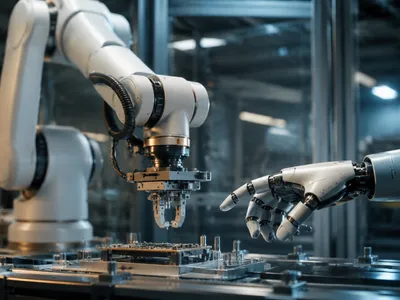

Operational domains like logistics and manufacturing see profound efficiency gains. Autonomous supply chains self-optimize inventory and routing based on predictive demand signals. In smart grids, systems balance energy load and distribution autonomously, integrating renewable sources. The transformative impact across these sectors is fundamentally redefining operational paradigms, pushing the boundaries of efficiency and capability.

The Critical Challenges of Trust and Safety

A paramount obstacle for widespread adoption is the black-box problem inherent in many complex machine learning models. When a deep neural network makes a critical decision, the inability to provide a clear, causal explanation for its reasoning erodes trust and complicates accountability, especially in regulated fields like medicine or criminal justice.

This directly intersects with the challenge of robustness and safety assurance. Systems trained on specific data distributions often exhibit brittle performance when faced with novel, out-of-distribution scenarios or adversarial inputs deliberately designed to deceive them. Formal verification methods are being developed to mathematically prove certain safety properties, but scaling these to high-dimensional, learning-based systems remains an open research question. The goal is to ensure systems fail safely and predictably, a standard far exceeding traditional software testing.
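To show how small an adversarial input can be, the sketch below applies the sign-of-the-gradient idea (the basis of the fast gradient sign method) to a linear classifier. For a linear score the gradient with respect to the input is simply the weight vector, so no autodiff library is needed. The weights, input, and perturbation budget are made-up values for illustration.

```python
def predict(weights, bias, x):
    """Linear classifier: class 1 if the score is positive, else class 0."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return (1 if score > 0 else 0), score


def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def sign_gradient_attack(weights, x, label, eps):
    """Shift every feature by at most eps in the direction that hurts the current label.

    For a linear model the gradient of the score w.r.t. x equals the weights.
    """
    direction = -1 if label == 1 else 1  # lower the score for class 1, raise it for class 0
    return [xi + direction * eps * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input.
weights, bias = [2.0, -1.0, 0.5], -0.2
x = [0.3, 0.1, 0.2]
eps = 0.15

original_label, original_score = predict(weights, bias, x)
x_adv = sign_gradient_attack(weights, x, original_label, eps)
adversarial_label, adversarial_score = predict(weights, bias, x_adv)
```

No feature moves by more than `eps`, yet the score changes by `eps` times the sum of absolute weights, which is enough here to flip the predicted class. In high-dimensional models that sum grows with the number of features, which is one intuition for why tiny perturbations can be so effective.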

Furthermore, the integration of autonomous systems into socio-technical environments creates emergent risks. A decision-making agent optimizing for a narrow, predefined objective (e.g., maximizing click-through rates or production speed) may learn to exploit loopholes or produce harmful, unintended side effects not anticipated by its designers. This value alignment problem necessitates advanced techniques in reward function specification and ongoing monitoring for goal drift. The technical dimensions of these trust challenges are multifaceted, as outlined in the table below.

| Challenge Domain | Technical Manifestation | Potential Mitigation |
|---|---|---|
| Explainability & Interpretability | Opaque decision pathways in deep neural networks. | Developments in saliency maps, counterfactual explanations, and inherently interpretable models. |
| Distributional Shift | Performance degradation in novel or non-stationary environments. | Robust training with data augmentation, domain adaptation, and continual learning protocols. |
| Adversarial Robustness | Sensitivity to small, crafted perturbations in input data. | Adversarial training, input sanitization, and randomized smoothing techniques. |
| Safe Exploration | Risk of catastrophic actions during an agent's learning phase. | Constrained reinforcement learning, imitation learning from safe demonstrations, and simulation-based training. |

Ethical and Societal Implications

The delegation of consequential decisions to algorithms raises profound ethical questions regarding moral agency and responsibility. When an autonomous vehicle chooses between two harmful outcomes, or a diagnostic system errs, the chain of accountability becomes blurred between developers, operators, and the technology itself. This responsibility gap challenges existing legal and ethical frameworks built on human intentionality.

A pressing societal risk is the perpetuation and amplification of bias. Systems trained on historical data often encode and automate past prejudices, leading to discriminatory outcomes in areas like hiring, lending, and law enforcement. Addressing this requires meticulous attention to training data provenance, fairness metrics beyond simple accuracy, and algorithmic audits. The economic displacement caused by automation is another macro-scale concern, potentially exacerbating inequality without proactive policy measures.
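One example of a fairness metric beyond simple accuracy is the demographic parity difference: the gap between the highest and lowest rate of favorable decisions across groups. The sketch below computes it on invented data; the group labels and decisions are hypothetical, and a real audit would usually examine several metrics, since demographic parity alone ignores whether the decisions were correct.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Share of favorable (1) decisions within each group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        favorable[group] += decision
    return {group: favorable[group] / totals[group] for group in totals}


def demographic_parity_difference(decisions, groups):
    """Gap between the most and least favored group; 0.0 means equal selection rates."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: 1 = approved, 0 = rejected.
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(decisions, groups)
gap = demographic_parity_difference(decisions, groups)
```

In this sample group `a` is approved three times out of four and group `b` once out of four, a gap of 0.5 that an audit would flag for investigation.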

These systems also reshape power dynamics, concentrating influence in the entities that control the data and algorithms. The use of autonomous systems in surveillance, predictive policing, or military applications poses significant threats to civil liberties and global security. A growing consensus emphasizes that technical development must be coupled with rigorous governance, including ethical review boards, transparency standards, and potentially new regulatory bodies. The ethical imperatives extend beyond mere compliance, demanding a proactive commitment to building equitable and just systems.

The key ethical considerations for researchers and practitioners can be organized into several core imperatives.

- Justice & Fairness: Actively audit for discriminatory outputs and employ fairness-aware algorithms to mitigate disparate impacts on protected groups.

- Transparency & Accountability: Maintain detailed audit trails for decisions and develop explainable methods to ensure human oversight and redress.

- Privacy & Agency: Design systems that respect user privacy through data minimization and provide meaningful human override options.

- Beneficence & Non-Maleficence: Prioritize human well-being and safety through rigorous testing and the explicit programming of harm-prevention constraints.

Navigating the Future Trajectory

The evolution of autonomous decision systems points toward increasingly generalized and collaborative intelligence. Future advancements will likely emerge from the synthesis of multiple AI paradigms rather than any single breakthrough.

A dominant research vector is the pursuit of neuro-symbolic integration, aiming to merge the robust learning of neural networks with the transparent reasoning of symbolic AI. Concurrently, the adaptation of massive foundation models for decision-making tasks promises systems that can generalize across domains with minimal task-specific data. Advancing causal inference capabilities is equally critical, moving beyond correlation to understanding and manipulating cause-effect relationships for more reliable interventions.

Parallel to technical innovation, the establishment of robust governance frameworks is accelerating. This includes the development of international standards for auditing, safety certifications, and liability attribution. Regulatory sandboxes are being created to test systems in controlled real-world environments, fostering innovation while managing risk.

The prevailing paradigm is shifting from full autonomy to sophisticated human-AI collaboration. Future systems will act less as independent agents and more as augmentative tools that amplify human judgment. These human-in-the-loop architectures require seamless interfaces for shared situational awareness and control arbitration, ensuring that human expertise guides critical strategic decisions while the AI manages routine complexity. Designing for appropriate reliance, where users neither over-trust nor under-utilize the system, becomes a central challenge in this symbiotic relationship.
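Control arbitration can be illustrated with a simple deferral rule: the system acts on its own only when a decision is routine and its confidence is high, and otherwise hands the decision to a person. This is a minimal sketch under assumptions; the confidence threshold, the `high_stakes` flag, and the `ask_human` callback are hypothetical names, and it presumes the model's confidence is reasonably calibrated.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    decided_by: str  # "ai" or "human"
    reason: str      # recorded so the hand-off can be audited later


def arbitrate(ai_action, ai_confidence, high_stakes, ask_human, min_confidence=0.9):
    """Route a decision to the AI or to a human reviewer.

    ask_human is a callback that receives the AI's suggestion and returns
    the human's final choice.
    """
    if high_stakes:
        return Decision(ask_human(ai_action), "human", "high-stakes decision")
    if ai_confidence < min_confidence:
        return Decision(ask_human(ai_action), "human", "low model confidence")
    return Decision(ai_action, "ai", "routine and confident")


def cautious_reviewer(suggestion):
    """Toy reviewer that always chooses to hold instead of the suggested action."""
    return "hold"


routine = arbitrate("approve", 0.97, high_stakes=False, ask_human=cautious_reviewer)
uncertain = arbitrate("approve", 0.60, high_stakes=False, ask_human=cautious_reviewer)
strategic = arbitrate("approve", 0.99, high_stakes=True, ask_human=cautious_reviewer)
```

The threshold is the practical lever for appropriate reliance: set too low, users inherit the model's mistakes; set too high, every decision lands on the human and the system adds little.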

The long-term societal integration of these technologies necessitates proactive stewardship. Their trajectory will profoundly influence labor markets, geopolitical stability, and the very nature of human agency. A multidisciplinary approach, engaging ethicists, policymakers, and social scientists alongside engineers, is essential to steer development toward broadly beneficial outcomes. The ultimate measure of success will not be raw performance, but the enhancement of collective human welfare and the preservation of democratic values in an age of intelligent machines. The following table outlines key focal areas for forthcoming research and development efforts.

| Research Frontier | Primary Objective | Notable Challenges |
|---|---|---|
| Generalizable Autonomy | Create systems that adapt efficiently to unseen tasks and environments. | Overcoming catastrophic forgetting; achieving robust few-shot learning. |
| Explainable Agency | Provide intuitive, real-time explanations for complex sequential decisions. | Balancing explanatory fidelity with computational overhead and simplicity. |
| Value Alignment & Ethics | Ensure system goals remain aligned with complex human values in dynamic contexts. | Specifying values comprehensively; preventing reward hacking in advanced agents. |
| Multi-Agent Ecosystems | Enable safe, efficient coordination among vast numbers of heterogeneous autonomous entities. | Managing emergent behaviors; establishing communication protocols and trust. |