The Data Deluge

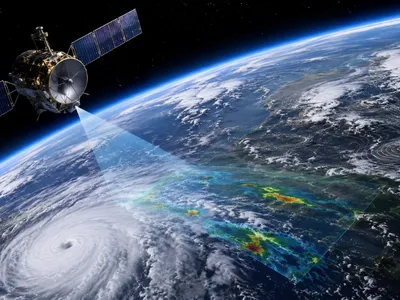

Modern meteorological sensors generate an unprecedented volume of observations. Satellite constellations, radar networks, and in-situ probes produce petabytes of data daily.

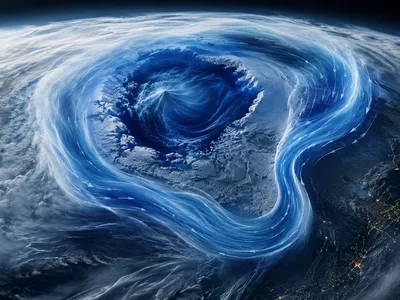

Each storm system embeds intricate spatial and temporal signatures across multiple scales. Machine learning models ingest this complexity directly, transforming raw signals that are too voluminous for human analysts to process manually into structured representations.

The challenge lies not in data scarcity but in extracting predictive patterns from heterogeneous sources. Satellite radiances, lightning arrays, and Doppler wind fields must be fused into a unified analytical framework. By training on decades of reanalysis products, neural networks learn to prioritize the most thermodynamically relevant features, often identifying precursors that traditional parameterized models overlook. This capability shifts the bottleneck from data collection to algorithmic architecture, where deep learning encodes physical priors directly into latent spaces.

Why Traditional Models Struggle with Complexity

Traditional numerical weather prediction models approximate fluid dynamics using discretized equations, which smooth over sub‑grid processes like convective initiation and microphysical interactions. Parameterization schemes, such as those for entrainment rates in cumulonimbus clouds, introduce systematic biases, while boundary layer turbulence and aerosol‑cloud interactions add further uncertainty, even in high-resolution ensemble simulations. As Atmospheric Science Insights Into Weather Extremes shows, these limitations motivate a complementary, data-driven approach.
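The smoothing effect of a coarse grid can be illustrated with a toy example. The sketch below is not taken from any operational model: the 1-D domain, the Gaussian "updraft" (20 m/s peak, roughly 1 km wide), and the 10 km cell size are all assumed values chosen only to show how block averaging hides a sub‑grid feature.

```python
import numpy as np

def block_average(field, factor):
    """Average consecutive groups of `factor` points, mimicking a coarser grid."""
    field = np.asarray(field, dtype=float)
    n = field.size // factor * factor  # drop any incomplete trailing block
    return field[:n].reshape(-1, factor).mean(axis=1)

# Hypothetical fine grid: 100 km domain at 0.1 km spacing.
x = np.linspace(0.0, 100.0, 1000, endpoint=False)

# Hypothetical narrow updraft centred at 50 km (values are illustrative only).
updraft = 20.0 * np.exp(-((x - 50.0) / 1.0) ** 2)

# Coarse grid with 10 km cells (100 fine points per cell).
coarse = block_average(updraft, 100)

print("fine-grid peak (m/s):  ", round(float(updraft.max()), 2))
print("coarse-grid peak (m/s):", round(float(coarse.max()), 2))
```

The domain mean is unchanged by the averaging, but the peak that would matter for convective initiation is spread across a whole cell. That lost detail is what a parameterization scheme has to estimate.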

AI introduces a transformative approach by learning from observational histories. Graph neural networks track Lagrangian trajectories, preserving coherent structures lost in Eulerian grids, and when combined with physics-based solvers, they reduce spin-up time and enhance extreme-event localization. This hybrid framework bridges scales that numerical models cannot resolve while maintaining physical conservation laws through differentiable programming.

Physical Constraints

Embedding physical laws into neural architectures remains a fundamental challenge. Conservation of mass, momentum, and energy must be respected to avoid unphysical extrapolations in storm-scale predictions.

Hard constraints implemented via differentiable solvers enforce these principles, yet they increase computational overhead and require careful discretization. Hybrid models that balance data‑driven flexibility with rigid physical priors often outperform purely empirical or purely numerical approaches in capturing mesocyclone evolution.

| Constraint Type | Implementation in AI | Impact on Storm Prediction |
|---|---|---|
| Mass Continuity | Divergence‑free layers | Prevents spurious pressure tendencies |
| Momentum Conservation | Hamiltonian neural networks | Preserves vorticity structures |
| Thermodynamic Consistency | Entropy‑based loss terms | Reduces biases in CAPE estimates |

Recent architectures incorporate these constraints through physics‑informed loss functions that penalize violations during training. This approach yields physically plausible storm trajectories even when observational data are sparse, particularly for supercell environments where traditional parameterizations often fail.
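A minimal sketch of such a loss, for the mass-continuity case only, might look like the following. It assumes a strongly simplified setting: a 2-D horizontal wind field on a regular grid, treated as non-divergent. The function names, the `weight` parameter, and the choice of a mean-squared penalty are illustrative assumptions, not a description of any particular published architecture.

```python
import numpy as np

def divergence(u, v, dx, dy):
    """Finite-difference horizontal divergence du/dx + dv/dy.

    u, v are 2-D arrays indexed [y, x]; dx, dy are grid spacings.
    """
    du_dx = np.gradient(u, dx, axis=1)
    dv_dy = np.gradient(v, dy, axis=0)
    return du_dx + dv_dy

def physics_informed_loss(u_pred, v_pred, u_obs, v_obs, dx, dy, weight=1.0):
    """Data misfit plus a soft penalty on continuity violations.

    `weight` (an assumed hyperparameter) trades off fit against physics.
    """
    data_term = np.mean((u_pred - u_obs) ** 2 + (v_pred - v_obs) ** 2)
    physics_term = np.mean(divergence(u_pred, v_pred, dx, dy) ** 2)
    return data_term + weight * physics_term

# Usage on a small synthetic grid: solid-body rotation is divergence-free,
# so it incurs no physics penalty, while a purely divergent flow does.
y, x = np.mgrid[0:5, 0:5].astype(float)
print(physics_informed_loss(-y, x, -y, x, 1.0, 1.0))  # rotation
print(physics_informed_loss(x, y, x, y, 1.0, 1.0))    # divergent outflow
```

This is a soft constraint: violations are discouraged during training rather than ruled out, which contrasts with the divergence‑free layers in the table that enforce the constraint by construction.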

How AI Learns the Language of Storms

Atmospheric flows encode their evolution through multi‑scale spatial patterns and temporal sequences. Deep learning models treat these signals as a language, discovering hierarchical representations that map raw radar reflectivity to latent dynamical states.

Transformers applied to storm tracking learn long‑range dependencies between upper‑level divergence and surface mesocyclogenesis. Self‑attention mechanisms identify which precursor features (e.g., mid‑level rotation, differential heating) most strongly influence subsequent intensification. This language analogy extends to multimodal fusion, where satellite imagery, lightning data, and radar volumes are encoded into a shared embedding space.
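The self‑attention step described above can be sketched in a few lines of NumPy. This is a single untrained attention head with random weights, shown only to make the mechanics concrete. Treating each row of `x` as one precursor feature vector (or one time step) is an assumption for illustration, as are the dimensions chosen.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention for one head.

    x: (n_tokens, d_model). Returns (output, attention_weights).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights

# Hypothetical input: 6 tokens (e.g. precursor features), 8 channels each.
rng = np.random.default_rng(0)
n_tokens, d_model, d_head = 6, 8, 4
x = rng.normal(size=(n_tokens, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)
```

Row `i` of `weights` shows how strongly token `i` draws on every other token. In a trained model, inspecting these rows is one way to see which precursors the network relies on.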

The following techniques illustrate how AI architectures parse the structural grammar of convective systems:

- 🌐 Spatial transformers – Capture non‑local interactions in storm organization

- ⏱️ Temporal convolutional networks – Extract phase‑space trajectories from radar sequences

- 📊 Graph attention networks – Model interactions between storm cells as dynamic graphs

By training on thousands of storm lifecycles, these models internalize the probabilistic grammar of severe weather. The resulting representations enable probabilistic nowcasting with calibrated uncertainty, a capability that deterministic extrapolation techniques lack in operational settings.
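Whether forecast probabilities are actually calibrated can be checked with standard tools such as the Brier score and a reliability table. The sketch below assumes binary outcomes (event or no event) and forecast probabilities in [0, 1]. The function names and the five-bin default are illustrative choices, not part of any specific nowcasting system.

```python
import numpy as np

def brier_score(prob, outcome):
    """Mean squared difference between forecast probability and 0/1 outcome."""
    prob = np.asarray(prob, dtype=float)
    outcome = np.asarray(outcome, dtype=float)
    return float(np.mean((prob - outcome) ** 2))

def reliability_table(prob, outcome, n_bins=5):
    """Per-bin (mean forecast probability, observed frequency, count).

    Only bins that contain at least one forecast are returned.
    """
    prob = np.asarray(prob, dtype=float)
    outcome = np.asarray(outcome, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    idx = np.digitize(prob, edges[1:-1])  # bin index in 0 .. n_bins - 1
    rows = []
    for b in range(n_bins):
        mask = idx == b
        if mask.any():
            rows.append((float(prob[mask].mean()),
                         float(outcome[mask].mean()),
                         int(mask.sum())))
    return rows

# Usage with made-up forecasts and outcomes:
p = [0.1, 0.1, 0.9, 0.9]
o = [0, 0, 1, 1]
print(brier_score(p, o))
print(reliability_table(p, o))
```

For a well-calibrated system, the first two entries of each row are close: events forecast at about 30% should occur about 30% of the time.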

Pattern Emergence

Deep learning models are highly effective at detecting coherent structures that signal rapid storm intensification. Rotational signatures in Doppler radar often appear hours before tornadic vortices form, and neural networks trained on diverse storm morphologies learn to separate transient noise from reliable precursors. These architectures capture complex interactions between vertical wind shear, instability gradients, and orographic effects that traditional deterministic models struggle to resolve.

Attention mechanisms highlight the spatial regions that most influence forecast uncertainty, with supercell updrafts, storm‑relative helicity, and cold pool boundaries serving as key decision nodes. Validated across independent events, these patterns generalize well to new regions and storm types, which matters most for rare, high‑impact events where historical data are limited.

Bridging Prediction and Practical Application

Operational meteorology now leverages AI to produce ensemble‑conditioned nowcasts, which provide probabilistic forecasts while remaining actionable for emergency managers. These systems communicate uncertainty clearly, enhancing decision-making in high-stakes situations.

Real-time deployment demands resilient handling of missing data, sensor calibration shifts, and computational delays. Edge‑optimized models running on GPU‑accelerated workstations deliver high-resolution guidance within the crucial 0-to-6-hour window, ensuring timely support for operational centers.

Transitioning AI from research to operations relies on strict testing against standard numerical models. Verification metrics like spatial probability of detection and false alarm ratios inform iterative improvements, while interpretability tools show why storms are flagged for rapid intensification, fostering trust among forecasters and aligning outputs with public safety needs.
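The two verification metrics named above follow from a contingency table of hits, misses, and false alarms. The sketch below uses the simplest grid-point matching, where a forecast cell counts as a hit only if the same cell was observed. Neighborhood-based spatial variants relax this, and the example grids are invented for illustration.

```python
import numpy as np

def contingency_scores(forecast, observed):
    """Probability of detection (POD) and false alarm ratio (FAR).

    forecast, observed: same-shape arrays of 0/1 (or bool) event flags.
    POD = hits / (hits + misses); FAR = false alarms / (hits + false alarms).
    """
    forecast = np.asarray(forecast, dtype=bool)
    observed = np.asarray(observed, dtype=bool)
    hits = int(np.sum(forecast & observed))
    misses = int(np.sum(~forecast & observed))
    false_alarms = int(np.sum(forecast & ~observed))
    pod = hits / (hits + misses) if hits + misses else float("nan")
    far = (false_alarms / (hits + false_alarms)
           if hits + false_alarms else float("nan"))
    return {"hits": hits, "misses": misses,
            "false_alarms": false_alarms, "pod": pod, "far": far}

# Made-up 2x3 grids of forecast and observed event flags.
forecast = [[1, 1, 0],
            [0, 1, 0]]
observed = [[1, 0, 0],
            [1, 1, 0]]
scores = contingency_scores(forecast, observed)
print(scores)
```

Here two of three observed cells are detected (POD of 2/3) and one of three forecast cells is a false alarm (FAR of 1/3). Tracking both together guards against a model that raises POD simply by over-forecasting.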

Hybrid systems that combine AI products with human expertise have proven superior to either approach alone. Machine learning handles routine pattern recognition, allowing analysts to focus on complex reasoning, while collaborative human‑AI decision frameworks expand scalability and adaptability. Transfer learning and online updates help models adjust to new regions and evolving climatic conditions.